The AI Business Case Blueprint

Why AI Gets Funded When Leaders Trust the Outcome

Artificial intelligence initiatives rarely fail because the technology is incapable. More often, they fail because the case for investment was never compelling to begin with. Organizations continue to approach AI funding as a technical problem, one that can be solved with better models, stronger architectures, or more sophisticated tooling. In reality, funding decisions are not made on technical merit alone. They are made on confidence.

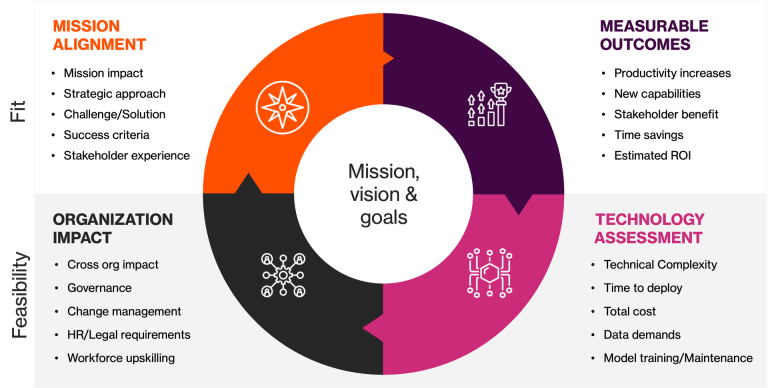

Executives fund initiatives when they believe three things to be true: that a real problem exists, that the proposed solution will materially improve the situation, and that the risks introduced by the solution are understood and manageable. An effective AI business case is not an exercise in explaining how AI works. It is an exercise in demonstrating why the organization will be better off after the investment than before it.

From Novelty to Necessity

Most AI proposals begin in the wrong place. They lead with the technology itself, describing models, capabilities, and abstractions, assuming that novelty will generate excitement. For decision-makers responsible for budgets, risk, and outcomes, novelty is rarely persuasive. What matters is necessity.

A credible business case starts by identifying a concrete business problem that already exists. This problem must be framed in terms the organization understands: lost revenue, operational inefficiency, scaling constraints, regulatory exposure, customer friction, or strategic disadvantage. It must also explain why the problem has become acute now when it may have been tolerable in the past.

Only once the pressure is clearly established does AI become relevant. At that point, AI is no longer an experiment looking for justification. It becomes a response to an existing constraint. This shift, from showcasing capability to addressing necessity, is the first inflection point between an unfunded idea and a funded initiative.

Defining the AI System as a Role

Once the problem is clear, the next challenge is specificity. Many AI initiatives stall because they describe intelligence in broad, ambiguous terms. Phrases like “AI-driven insights” or “intelligent automation” obscure responsibility and make it difficult to evaluate impact.

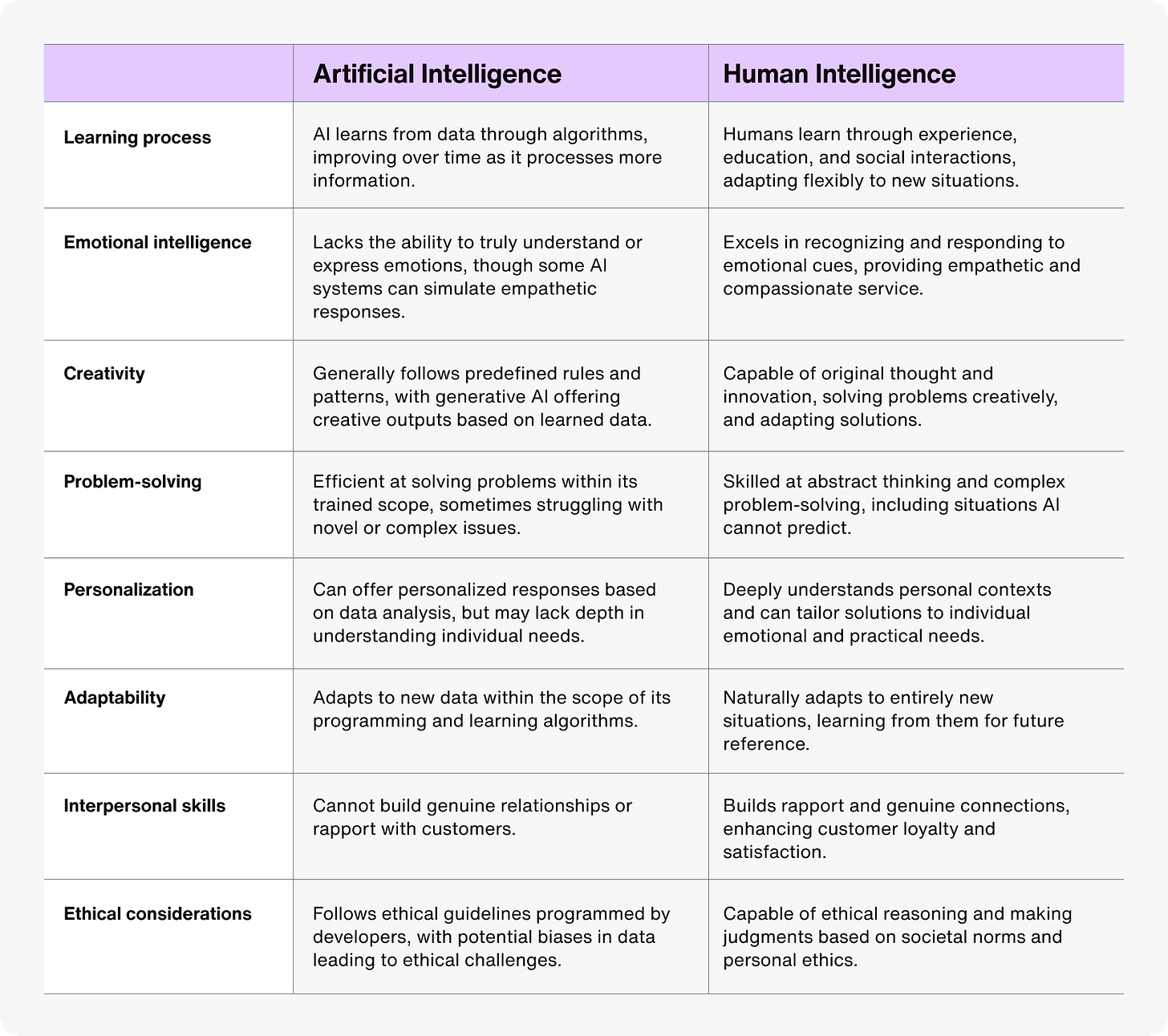

Successful business cases treat AI systems as if they were roles within the organization. They define what the system does, what decisions it is permitted to make, what actions it can take, and what data it is allowed to access. Just as importantly, they define what the system cannot do.

This framing changes the conversation. Instead of debating abstract capabilities, stakeholders can reason about scope, authority, and accountability. The AI system becomes legible. It can be governed, measured, and trusted. Clarity at this stage does more to unlock funding than any architectural sophistication introduced later.
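One way to make a role definition concrete is to write it down as data that stakeholders can review. The sketch below is only an illustration: the `AgentRole` class, the invoice-triage example, and every action and dataset name are hypothetical and do not come from any particular framework.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentRole:
    """Hypothetical role description for an AI system (all names are illustrative)."""

    name: str
    purpose: str
    allowed_actions: frozenset[str]
    allowed_data: frozenset[str]
    prohibited_actions: frozenset[str] = field(default_factory=frozenset)

    def may_perform(self, action: str) -> bool:
        # Deny by default: an action must be explicitly allowed and not prohibited.
        return action in self.allowed_actions and action not in self.prohibited_actions

    def may_read(self, dataset: str) -> bool:
        # Data access is also deny by default.
        return dataset in self.allowed_data


# Assumed example: an assistant that sorts invoices but never approves payments.
invoice_triage = AgentRole(
    name="invoice-triage-assistant",
    purpose="Classify incoming invoices and route them to the right approver",
    allowed_actions=frozenset({"classify_invoice", "route_to_approver"}),
    allowed_data=frozenset({"invoices", "vendor_directory"}),
    prohibited_actions=frozenset({"approve_payment", "edit_vendor_record"}),
)

print(invoice_triage.may_perform("classify_invoice"))
print(invoice_triage.may_perform("approve_payment"))
```

Writing down what the system cannot do, next to what it can, gives reviewers something specific to approve or challenge.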

Measuring Value Against Reality

No AI system exists in a vacuum. Every proposed initiative competes with an existing approach, whether that approach is a manual process, a legacy tool, or simple inaction. A strong business case confronts this reality directly.

Rather than positioning AI as transformational in the abstract, effective proposals compare it rigorously against the status quo. They examine how long tasks currently take, how often errors occur, how decisions are delayed, and where costs accumulate. They then show how the proposed system changes those dynamics in concrete terms.

This comparison does not need to be perfect. It needs to be honest. AI does not have to outperform humans in every dimension to justify investment. It only needs to produce meaningful improvement where it matters most. Executives fund change when the delta between today and tomorrow is unmistakable.
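A status quo comparison can be as simple as a table of before and after figures. The sketch below assumes three metrics (time per task, error rate, cost per task) and uses made-up numbers purely for illustration; a real case would substitute measurements the organization already tracks.

```python
from dataclasses import dataclass


@dataclass
class ProcessMetrics:
    minutes_per_task: float
    error_rate: float      # fraction of tasks needing rework, between 0 and 1
    cost_per_task: float   # fully loaded cost in the organization's currency


def relative_change(baseline: ProcessMetrics, proposed: ProcessMetrics) -> dict[str, float]:
    """Return the relative change per metric; a negative value means a reduction.

    Assumes every baseline value is non-zero.
    """
    names = ("minutes_per_task", "error_rate", "cost_per_task")
    return {
        name: (getattr(proposed, name) - getattr(baseline, name)) / getattr(baseline, name)
        for name in names
    }


# Illustrative numbers only, not measurements from any real process.
baseline = ProcessMetrics(minutes_per_task=20.0, error_rate=0.08, cost_per_task=10.0)
proposed = ProcessMetrics(minutes_per_task=5.0, error_rate=0.06, cost_per_task=4.0)

delta = relative_change(baseline, proposed)
for metric, change in delta.items():
    print(f"{metric}: {change:+.0%}")
```

Reporting each metric separately keeps the comparison honest: a modest gain in one dimension is not hidden behind a large gain in another.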

Treating ROI as a Trust Exercise

Return on investment is often where credibility is won or lost. Inflated projections and vague assumptions may look impressive, but they undermine trust. Leaders evaluating AI investments are not looking for optimistic numbers; they are looking for defensible ones.

A strong ROI model acknowledges costs openly, including implementation effort, operational overhead, adoption friction, and ongoing oversight. It makes assumptions explicit and ties expected benefits to metrics the organization already tracks. Where uncertainty exists, it is named rather than obscured.

This approach may feel conservative, but it signals seriousness. A business case that treats ROI as an exercise in transparency rather than persuasion invites confidence. And confidence, not enthusiasm, is what moves budgets.
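A transparent ROI model can be sketched in a few lines. The version below is one simple formulation, not a standard: it assumes a fixed implementation cost, a constant annual operating cost, and an `adoption_rate` factor that discounts benefits for adoption friction. All figures are hypothetical.

```python
def simple_roi(
    annual_benefit: float,
    implementation_cost: float,
    annual_operating_cost: float,
    years: int,
    adoption_rate: float = 1.0,
) -> float:
    """Return (total benefit - total cost) / total cost over the period.

    adoption_rate is an assumed fraction (0 to 1) of the benefit actually
    realized, a stand-in for adoption friction.
    """
    total_cost = implementation_cost + annual_operating_cost * years
    total_benefit = annual_benefit * adoption_rate * years
    return (total_benefit - total_cost) / total_cost


# Hypothetical scenarios: (annual benefit, adoption rate).
# Naming the range makes the uncertainty explicit instead of hiding it.
scenarios = {
    "conservative": (150_000, 1.0),
    "expected": (250_000, 0.8),
    "optimistic": (400_000, 1.0),
}

results = {
    label: simple_roi(
        annual_benefit=benefit,
        implementation_cost=200_000,
        annual_operating_cost=50_000,
        years=3,
        adoption_rate=rate,
    )
    for label, (benefit, rate) in scenarios.items()
}

for label, value in results.items():
    print(f"{label}: {value:.0%}")
```

Presenting a range of scenarios with the assumptions behind each one lets reviewers challenge the inputs rather than the conclusion.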

Risk as a First-Class Concern

Every AI proposal carries risk, whether or not it acknowledges it. Data exposure, unintended actions, compliance implications, and operational failures are already on the minds of executives reviewing AI investments. When these concerns are absent from a proposal, they do not disappear; they simply become reasons to delay or reject funding.

The most credible business cases address risk directly. They explain how access is controlled, how behavior is monitored, how failures are detected, and how systems can be safely constrained or shut down if necessary. This is not about eliminating risk entirely. It is about demonstrating that risk is understood and managed deliberately.

By surfacing these considerations early, AI proposals shift from appearing reckless to appearing mature. Governance, in this context, becomes a signal of readiness rather than an obstacle to progress.

Governance as the Path to Scale

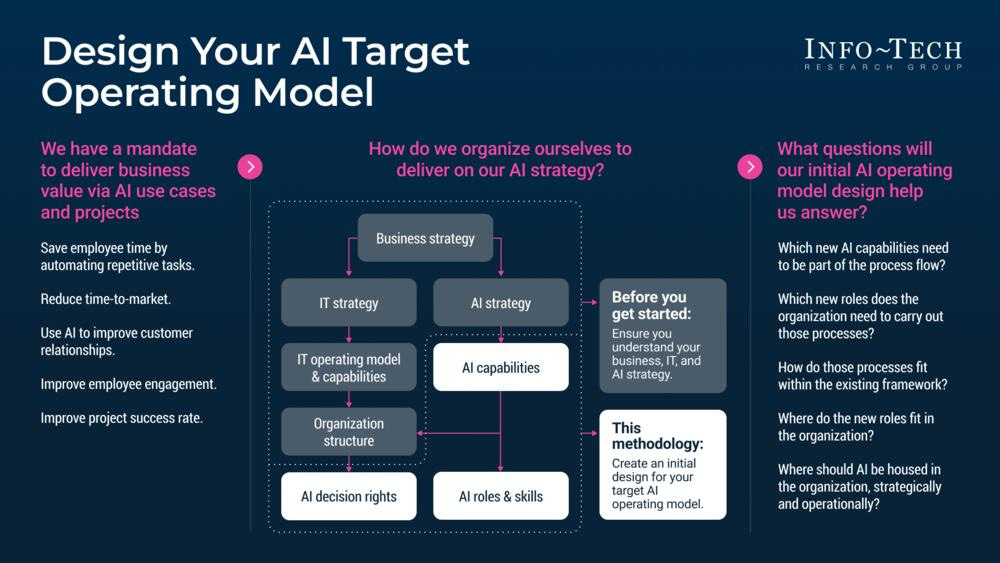

Many AI initiatives reach technical success but fail to transition into operational reality. They linger in pilot programs, producing value in isolation but never integrating fully into the organization. This outcome is rarely caused by technical shortcomings. It is caused by the absence of an operating model.

A fundable AI business case explains how the system will live in the organization over time. It defines ownership, accountability, performance measurement, and change control. It clarifies how the system will evolve without introducing chaos.

Governance, when treated as an afterthought, slows progress. When designed upfront, it enables scale. It provides a framework for trust, allowing organizations to move from experimentation to sustained operation without losing control.

What AI Business Cases Really Sell

In the end, AI proposals succeed or fail for reasons that have little to do with algorithms. They succeed when leaders believe the initiative will produce measurable improvement, introduce manageable risk, and strengthen the organization rather than destabilize it.

The purpose of an AI business case is not to celebrate technology. It is to demonstrate readiness. Readiness to solve a real problem. Readiness to operate responsibly. Readiness to scale without eroding trust.

When those conditions are met, funding follows naturally. Not because AI is impressive, but because the organization believes in the outcome. It’s our job as leaders to instill trust and bring the business along on the journey of AI adoption.

This is the way. This is the blueprint.
