AI Is Not a Feature. It Is a System.

Why AI Demands Infrastructure Thinking for Success

For more than a decade, the technology industry has trained leaders to think in features. Innovation arrives as an incremental capability, shipped behind a toggle, measured by adoption, and quietly absorbed into the product. This mental model worked when software was largely deterministic and bounded. But applying it to modern artificial intelligence—especially agentic systems—is a category error. AI does not behave like a feature because it does not remain contained. Once deployed, it becomes part of the operational fabric of the organization, shaping decisions, influencing outcomes, and introducing new forms of risk that cannot be toggled off.

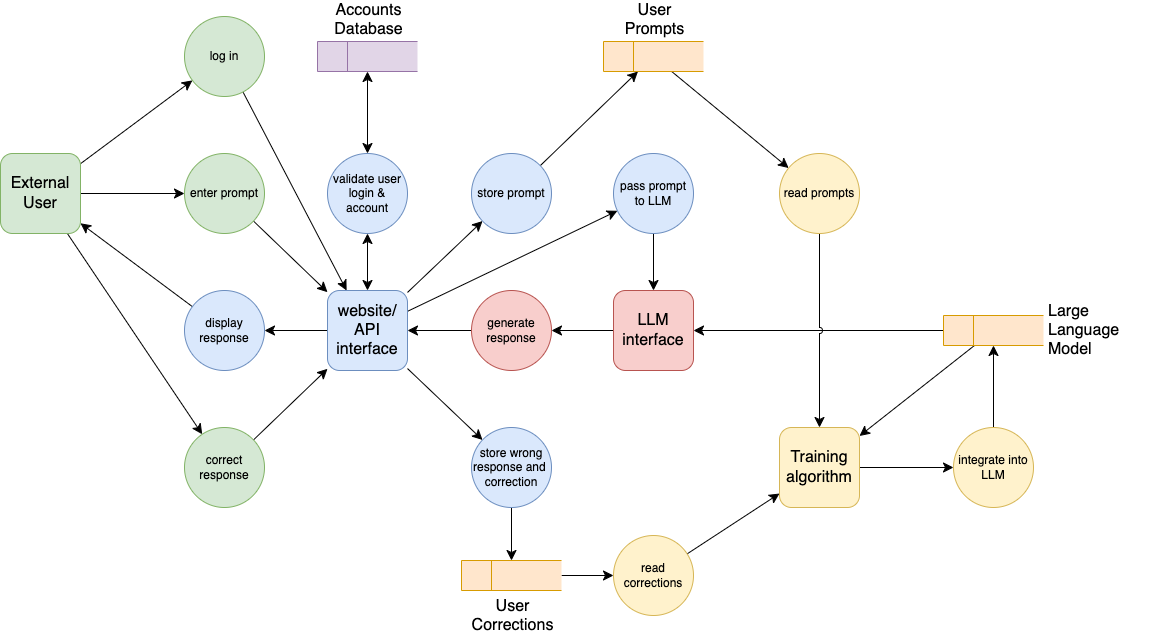

The idea that AI can be “added” to a product or process misunderstands what these systems actually are. Contemporary AI systems ingest vast amounts of context, retain state through memory and embeddings, and generate outputs that are probabilistic rather than fixed. More importantly, they participate in feedback loops. Their behavior changes based on interaction, data exposure, and optimization goals. This is the defining characteristic of a system, not a feature. Systems evolve. Features do not.

What truly distinguishes modern AI from previous waves of software is autonomy. These systems do not simply respond to inputs; they increasingly decide what to do next. They trigger actions, call tools, coordinate across services, and operate continuously without human supervision. Autonomy fundamentally alters the risk profile. A mistake made by an autonomous system does not wait for a meeting or a sprint cycle—it propagates instantly, often invisibly, and at scale. Yet many organizations deploy these systems with fewer controls than they would apply to a staging database or a junior employee.

This is why the most serious failures in AI adoption are rarely technical. They stem from leadership assumptions. When AI is framed as a feature, ownership becomes ambiguous. Responsibility diffuses across engineering, product, security, and compliance teams, none of whom are empowered to govern the system end to end. Decisions are optimized for speed and novelty rather than durability. Governance, if it appears at all, arrives after deployment, framed as a documentation exercise rather than a design discipline. By the time risk is visible, the system is already embedded.

Systems cannot be governed retroactively. They must be designed. Every mature organization understands this when it comes to infrastructure. No one would deploy a production payment system without explicit ownership, access controls, monitoring, and the ability to shut it down safely. AI systems deserve the same seriousness. They require defined authority, clear boundaries, continuous observability, and explicit failure modes. Without these, organizations are not innovating—they are gambling, often without realizing it.
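To make those four requirements concrete, here is a minimal sketch in Python of what designing them in might look like. It is an illustration under stated assumptions, not a reference implementation: `GovernedAgent`, `AgentHalted`, and the `lookup_order` tool are hypothetical names invented for this example, and a real deployment would back the log and the kill switch with durable infrastructure rather than in-memory fields.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


class AgentHalted(RuntimeError):
    """Raised when a tool call is attempted after the agent has been halted."""


@dataclass
class GovernedAgent:
    # Hypothetical wrapper: every name here is an assumption for illustration.
    owner: str                                     # defined authority
    allowed_tools: dict[str, Callable[..., Any]]   # clear boundaries (allowlist)
    audit_log: list[dict] = field(default_factory=list)  # observability
    halted: bool = False                           # explicit failure mode

    def halt(self, reason: str) -> None:
        """Kill switch: after this, every tool call is refused."""
        self.halted = True
        self.audit_log.append({"event": "halt", "reason": reason})

    def call_tool(self, name: str, *args: Any) -> Any:
        """Run a tool only if the agent is live and the tool is allowlisted."""
        if self.halted:
            self.audit_log.append({"event": "refused", "tool": name})
            raise AgentHalted(f"agent owned by {self.owner} is halted")
        if name not in self.allowed_tools:
            self.audit_log.append({"event": "denied", "tool": name})
            raise PermissionError(f"tool {name!r} is outside this agent's boundary")
        result = self.allowed_tools[name](*args)
        self.audit_log.append({"event": "call", "tool": name, "args": args})
        return result


# Usage: one named owner, one permitted tool, everything else denied.
agent = GovernedAgent(
    owner="payments-platform-team",
    allowed_tools={"lookup_order": lambda order_id: {"id": order_id, "status": "shipped"}},
)
agent.call_tool("lookup_order", 42)
agent.halt("anomalous behavior under review")
```

The point of the sketch is the ordering: ownership, boundaries, logging, and shutdown exist before the agent takes its first action, so none of them has to be bolted on after something goes wrong.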

The emergence of agentic AI makes this reality unavoidable. Agents are not passive models generating text. They plan, execute, and adapt. They operate across tools and systems, often chaining actions in ways that are difficult to predict in advance. At this point, the analogy to software breaks down entirely. An agent that can access data, modify systems, or influence customers is functionally equivalent to a non-human employee. And yet we deploy these agents without onboarding, without role definitions, without performance oversight, and without termination mechanisms. The discrepancy is not subtle. It is alarming.

Some organizations are already adjusting their mental model. They are moving away from feature-centric thinking toward infrastructure thinking. They treat AI as something that must be architected deliberately, governed continuously, and monitored as closely as any mission-critical system. They recognize that trust, compliance, and safety are not obstacles to innovation but prerequisites for scaling it. This shift is not driven by regulation or fear. It is driven by experience, often hard-earned.

The uncomfortable truth is that AI will not slow down to accommodate organizational confusion. It will not pause while governance frameworks catch up or leadership roles are clarified. Systems deployed without intent tend to reveal their weaknesses under pressure, and AI systems apply that pressure constantly. Treating AI as a feature may feel expedient, but it creates fragility. Treating AI as a system is slower at first, but it is the only approach that produces resilience.

The future will reward organizations that recognize this distinction early. Not because they avoided risk entirely (no system ever does), but because they understood the nature of what they were building. AI is not a clever enhancement. It is a living system inside your organization. The question is no longer whether you will deploy it, but whether you are designing it with the respect systems demand.
